OpenAI has introduced its new GPT-5.4 mini and nano models, while Mistral is bringing Mistral Small 4 to market. The new models are designed for better performance and lower costs in programming and sub-agent tasks.

According to the announcement from OpenAI, GPT-5.4 mini and nano are “the most capable small models to date.” They focus on improving speed and efficiency in AI workflows and are expected to be particularly useful for systems requiring fast and reliable responses, such as programming assistants and sub-agents.

Better performance for programming tasks

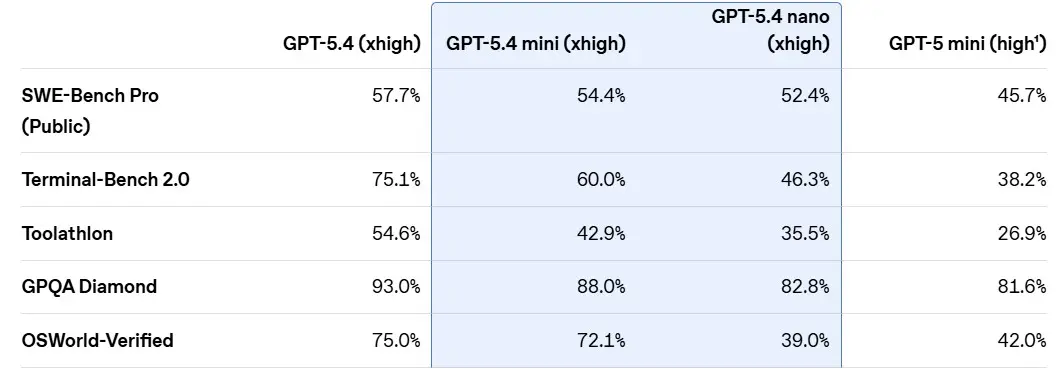

According to benchmarks conducted by OpenAI, GPT-5.4 mini outperforms its predecessor, GPT-5 mini, in programming tasks. It offers greater efficiency in operations, codebase navigation, and low-latency debugging, making it suitable for developers who need to work quickly and cost-effectively.

GPT-5.4 nano is the cheaper of the two models and is optimized for tasks where speed and cost are critical. It is particularly well-suited for simple support tasks such as classification and data extraction.

Multimodal applications

According to OpenAI, GPT-5.4 mini is strong not only in programming tasks but also in multimodal applications. The model can handle complex user interfaces and images quickly and effectively. In benchmarks such as OSWorld-Verified, it comes close to the performance of the larger GPT-5.4 model.

Additionally, GPT-5.4 mini offers excellent capabilities as a sub-agent. It can collaborate with larger models within systems like Codex, handling smaller sub-tasks more quickly and efficiently.
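The division of labor described in this article can be illustrated with a minimal routing sketch. The task categories, the `pick_model` function, and the model labels below are hypothetical and chosen for illustration; they are not API identifiers and do not describe how Codex actually delegates work.

```python
# Hypothetical task categories, loosely based on the use cases named in the article.
SIMPLE_TASKS = {"classification", "data_extraction"}
CODING_SUBTASKS = {"codebase_navigation", "debugging"}


def pick_model(task_type: str) -> str:
    """Return a display label (not an API model ID) for the given task type."""
    if task_type in SIMPLE_TASKS:
        # Cheapest tier for simple support tasks.
        return "GPT-5.4 nano"
    if task_type in CODING_SUBTASKS:
        # Small, fast model for coding sub-tasks.
        return "GPT-5.4 mini"
    # Anything else stays with the larger model.
    return "GPT-5.4"


for task in ("classification", "debugging", "architecture_planning"):
    print(task, "->", pick_model(task))
```

In a real system the routing decision would more likely be made by the larger model itself; a static lookup like this only shows the idea.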

Availability and pricing

GPT-5.4 mini is now available via API, Codex, and ChatGPT. It supports both text and image input, has a 400k context window, and costs $0.75 per million input tokens and $4.50 per million output tokens. GPT-5.4 nano is exclusively available via the API and costs $0.20 per million input tokens and $1.25 per million output tokens.
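To put these prices in perspective, the following minimal sketch estimates the cost of a workload from the per-million-token rates quoted above. The `estimate_cost` helper and the example token counts are assumptions made for illustration, and the sketch ignores any discounts or other billing details.

```python
# Prices in USD per million tokens, as quoted in the article.
PRICES = {
    "GPT-5.4 mini": {"input": 0.75, "output": 4.50},
    "GPT-5.4 nano": {"input": 0.20, "output": 1.25},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost in USD for a given number of input and output tokens."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical workload: 1,000,000 input tokens and 200,000 output tokens.
for model in PRICES:
    print(f"{model}: ${estimate_cost(model, 1_000_000, 200_000):.2f}")
```

For this hypothetical workload, the estimate comes to $1.65 for GPT-5.4 mini and $0.45 for GPT-5.4 nano.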

Mistral Small 4

At the same time, Mistral is releasing a new multimodal model called Mistral Small 4. A new feature of this model is that users can set how much reasoning effort the model spends on a task: the easier the task, the less time is needed to complete it. According to Mistral, this is meant to skip unnecessary steps and reduce both duration and cost.

The model is designed for developers, businesses, and researchers, and is expected to perform well in code automation, general chat assistants, document understanding, mathematics, research, and complex reasoning tasks. Mistral Small 4 is available via the API, AI Studio, Hugging Face, and build.nvidia.com.