Generatieve AI is in korte tijd uitgegroeid tot een vaste waarde in tal van bedrijven. Maar wat als je AI niet de juiste info kan aanreiken? Katy Fokou en Bert Vanhalst van Smals Research geven uitleg in een webinar.

Chatbots, internal assistants, and automatic document analysis are no longer a thing of the future. Yet many teams quickly run into a problem: large language models (LLM’s) do not have the current or company-specific data needed to generate truly valuable answers.

This is where Retrieval-Augmented Generation, or RAG, comes into play. AI specialists from Smals recently discussed during a webinar what RAG is, why it has become so popular, which choices are crucial during implementation, where the biggest pitfalls lie, and which practical lessons you can take away.

What is RAG and why is it so popular?

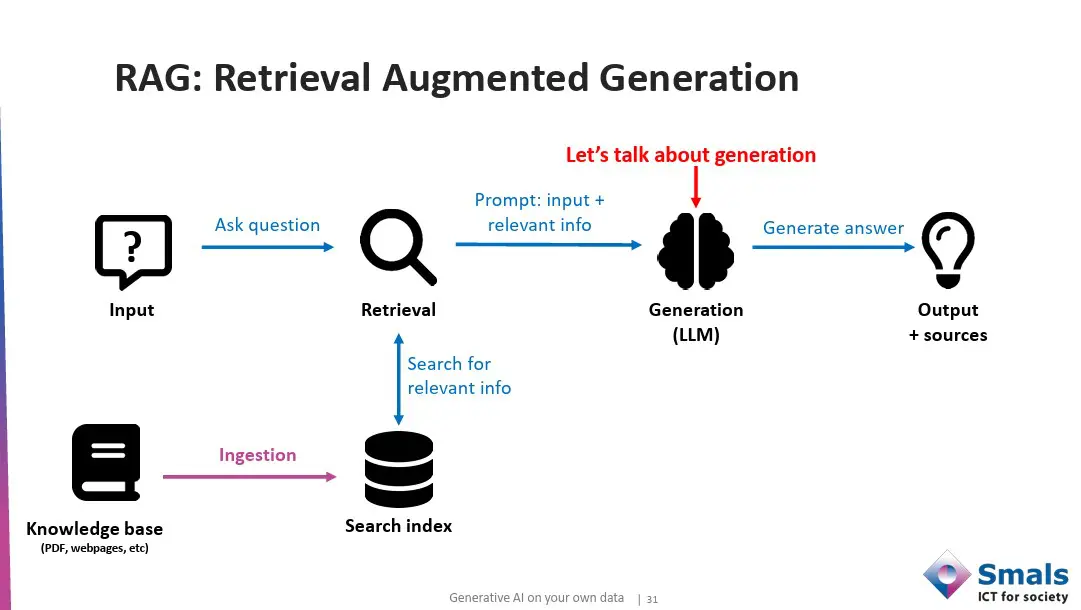

RAG stands for Retrieval-Augmented Generation. It is an architecture in which a language model does not only rely on its pre-trained knowledge, but also retrieves relevant information from external data sources. This retrieved information is added to the prompt, so that the model can generate an answer that is based on current and company-specific data.

In practice, the process takes place in three steps: data input, retrieval, and generation. First, documents, web pages, transcripts, or databases are collected via data ingestion and then cleaned and split into smaller text fragments or chunks. This is necessary to keep costs low. These chunks are converted to vectors and stored in a vector store. Then, for each user question, the system searches for the most relevant pieces of information, using vector search. Finally, the language model uses this context to generate a correct answer.

The popularity of RAG is easy to explain. The main reason to implement a RAG is to use reliable and controlled sources to answer a question. Yet, even with a RAG, data can be sent to an external model. If there is sensitive data, RAG is performed with a model that runs locally and not in the cloud. With RAG, they can reuse their existing knowledge, keep answers up-to-date, and at the same time create transparency by providing sources.

With Retrieval Augmented Generation, a language model can generate answers that are based on current and company-specific data.

Important design choices: prompts and models

Although it seems like an easy concept, a good RAG requires the right technical and content choices.

- Importance of good prompts

The prompt is the step between retrieval and generation. It is not enough to only pass on a question and a few text fragments. A good prompt contains clear instructions about what the model may and may not do. For example, you can say whether the model should limit itself to the information provided, indicate when information is missing, use a certain writing style, and structure the answer in a fixed way.

Specifying the output format is also important. Should the answer be in one paragraph? Should sources be explicitly mentioned? Is a table needed? The more details, the more controllable and consistent the output will be.

- Choosing the right LLM

Not every language model performs equally well in a RAG context. In the Smals Research webinar, various models such as GPT-4o, GPT-5, Mistral Large, Claude Sonnet 3.7 and Gemini 2.5 Flash were evaluated on criteria such as accuracy, latency, cost price, and answer quality. Gemini and Claude score better on complex questions, but are also more expensive and slower. Smaller or faster models are often sufficient for simple FAQ applications.

In addition, privacy plays a role. Open-source models offer more control over data, but require their own infrastructure and additional security measures. Commercial models are easier to integrate, but bring dependency and costs with them. The “best” choice therefore strongly depends on the use case.

Retrieval: underestimated success factor

A common mistake is to focus on the language model, while retrieval is at least as important. If the correct information is not retrieved, the model cannot generate a correct answer either.

- Keyword search, semantic or hybrid?

There are different search strategies. Classic keyword search works well for exact terms, but not for synonyms or variations. Semantic search uses vector embeddings to compare meaning. In practice, a hybrid approach, i.e. a combination of the two, often yields the best results.

- Precision or recall?

Retrieval is about the balance between precision (how many retrieved pieces are relevant) and recall (how many relevant pieces are actually found). For RAG, recall is usually more important: missing crucial information gives a less correct answer and more extra irrelevant text, which the language model can often ignore.

- Reranking and filtering

To further increase the quality, results can be re-ranked with specialized reranking models. Metadata filters, threshold values, and query reformulation also provide better results. This does require extra computing power and engineering work.

read also

AI is menselijk: even vergeetachtig en eenvoudig om de tuin te leiden

Pitfalls: where does it often go wrong?

Of course, there are also common pitfalls when using RAG.

- Evaluation is complex

Evaluating a RAG system turns out to be more difficult than expected. Answers are non-deterministic: there can be several correct answers to the same question and then the quality is subjective. Is an answer good because it is complete, or because it is concise?

To evaluate, two categories of metrics are used: metrics with reference – where the generated output is compared with a predetermined reference output or reference context – and metrics without such a reference. This examines whether the LLM has hallucinated and how accurate the answer is based on the information provided.

Automatic evaluation systems, in which a language model assesses the generated output (“LLM-as-judge”), provide scalability, but do not always match human assessments. Human evaluation therefore remains indispensable, especially for critical applications.

- Guardrails are necessary, but not watertight

Guardrails must prevent a system from producing unwanted or harmful output. Think of privacy breaches, hallucinations, inappropriate language, or off-topic questions. These security layers can be applied at various levels: at input, in the prompt (instructions), via rule-based filters, or with separate AI classifiers. Yet guardrails do not provide a full guarantee. Prompt injections, creative circumventions, and new attack patterns remain a risk. Continuous monitoring and iterative improvement are therefore essential.

- RAG is not a one-size-fits-all solution

One of the most important lessons from the webinar is that RAG should always be tailored to your needs. An internal search assistant has different requirements than a public chatbot. Sectors with high accuracy requirements, such as medical or legal applications, also require extra caution, specialized models, and human intervention.

read also

AI adoption is increasing faster than awareness of security

Practical takeaways: how to get started with RAG yourself

Anyone who wants to get started with RAG can follow a number of concrete guidelines.

- Start with the data

The quality of your data directly determines the quality of the output. It is important that you know which data you are using to gain insight into structure, completeness, and consistency. Pay attention to cleaning, deduplication, and removing sensitive information. “Garbage in, garbage out” remains true.

- Optimize iteratively

You don’t build a RAG system perfectly in one go. Optimization is an iterative process in which you continuously adjust retrieval, chunks, prompts, and evaluation. Small adjustments can have a big effect on the final quality.

- Match accuracy to the use case

Not every application needs to be equally reliable. For an internal research tool, a conceptually correct answer may suffice, while a chatbot must be almost error-free. Determine in advance what the impact of a wrong answer is and adjust your architecture accordingly.

- Combine man and machine

Automatic evaluation and guardrails are useful, but human control remains crucial. A hybrid approach with human feedback and supervision is highly recommended, especially for sensitive applications.

Conclusion

RAG is useful for using generative AI with your own data and thus generating more relevant answers, but it is not a magical solution. Success depends on data quality, good retrieval strategies, thoughtful prompt design, and realistic expectations.

Companies that want to start with RAG can best start small, experiment with limited user groups, and refine the system step by step.