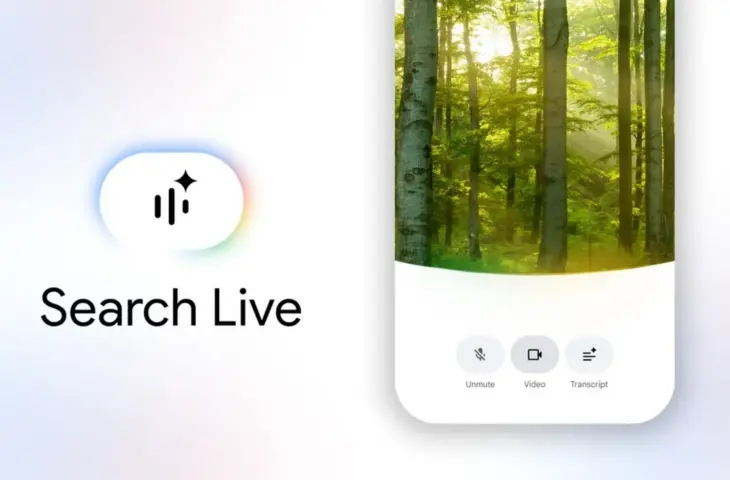

Google is rolling out its interactive AI search experience “Search Live” globally. The feature supports both voice and camera use within AI Mode.

Google has announced that its AI-driven conversational search feature, Search Live, is expanding to all countries where AI Mode is available.

Search becomes a conversation

With Search Live, users can not only type search queries but also talk to the search engine. In the Google app on Android and iOS, you can ask questions by voice and receive spoken answers, then continue the conversation with follow-up questions.

The technology runs on the new Gemini 3.1 Flash Live model, which, according to Google, enables more natural conversations and is multilingual by default. Users can therefore communicate with the search function in their own language.

Camera as extra context

In addition to voice, Google is integrating visual input. Users can turn on their camera so that Search Live sees what they see. According to Google, this makes it possible to get help with practical tasks, such as assembling furniture or identifying objects. The feature also works with Google Lens, allowing you to start an interactive AI conversation directly from camera mode about what is on screen.

With this expansion, Google Search is shifting further from a classic search engine toward an assistant that understands what you mean, sees what you see, and actively helps you work through a problem. According to Google, the combination of voice, image, and AI makes searching less dependent on exact keywords and more focused on context and interaction.